THE FACTS

In December 2025, someone broke into the Milton Keynes Buddhist Vihara and stole £3,000 in cash and jewellery—money earmarked for Sri Lanka’s flood relief fund. Surveillance cameras captured the thief on video. Thames Valley Police needed a name, so they ran the footage through the UK’s retrospective facial recognition system.

The system, built by German firm Cognitec, runs approximately 25,000 searches per month against 19 million mugshots held on the Police National Database. It returned a match: Alvi Choudhury.

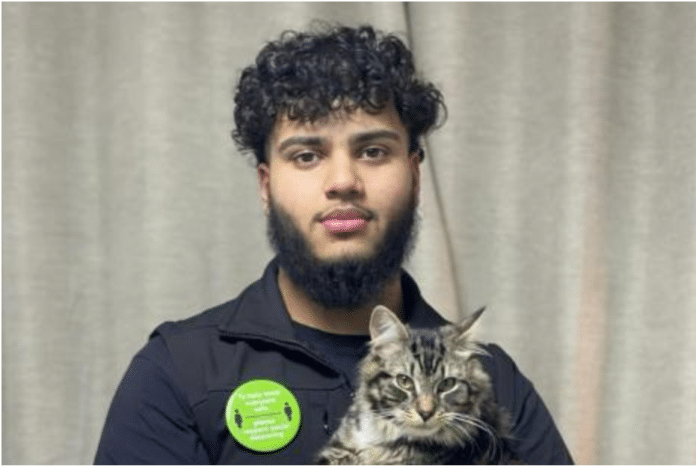

Choudhury is a 26-year-old software engineer living with his parents in Southampton—a city roughly 100 miles from Milton Keynes. He had never visited Milton Keynes. He had no criminal record of any kind. His mugshot was only in the police database because he had been wrongly arrested in 2021 after being attacked on a night out in Portsmouth. Police released him with no further action that time. They told him his information would be removed from the system. His DNA was deleted. His face was not.

On January 7, 2026, Choudhury was working remotely at home, on a call with a client, when Hampshire Constabulary officers—acting on behalf of Thames Valley Police—knocked on his door. He opened it with a smile. They handcuffed him. They would not let him collect his coat. They searched his parents’ home and his phones. He was taken into custody at around 4pm and placed in a cold, dark cell.

Nobody spoke to him for hours. He was not interviewed until around midnight. The interview took approximately ten minutes. That was all the time investigators needed to realise the man in the CCTV footage was not Alvi Choudhury. He was released at 2am.

Choudhury says officers were laughing at the footage when they let him go. One officer told him she knew he was not the suspect before the interview even started—she had compared the CCTV image to his custody photo and seen it immediately. The burglar in the video was visibly younger, with lighter skin, a larger nose, no facial hair, and smaller lips. The only shared characteristic, Choudhury says, was curly hair.

Five days after Choudhury’s arrest, a 23-year-old man named Eduard Zlatineanu was taken into custody for the same burglary. He pleaded guilty at Aylesbury Crown Court.

THE BLAME

Thames Valley Police’s own guidelines—along with the National Police Chiefs’ Council’s official position—are clear: facial recognition matches are intelligence, not evidence. They are investigative leads, not grounds for arrest. The Cognitec system returns a probability. A human is supposed to do the rest.

The human, in this case, did not do the rest. An investigating officer looked at the software’s output, compared it to Choudhury’s mugshot—taken more than four years earlier—and decided, without any corroborating investigation, that this was enough to arrest someone 100 miles away. No phone call. No alibi check. No attempt to determine whether Choudhury was anywhere near Milton Keynes on the day of the burglary. Just a machine’s guess and a glance at two photos of men who share a skin colour and a hair type.

What makes this worse is context. In December 2025, the same month as the burglary, a Home Office-commissioned assessment by the UK’s National Physical Laboratory revealed that the Cognitec algorithm used by police produces false positive rates of 4% for Asian faces and 5.5% for Black faces, compared to 0.04% for white faces. That is a hundred-fold disparity for Asian faces and closer to 140-fold for Black faces. The software version in use, FaceVACS-DBScan 5.5, dates from 2020. Cognitec has released multiple upgrades since. The Home Office never updated it.
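The scale of that disparity can be checked directly from the quoted rates. The following is a minimal sketch in Python that uses only the three percentages reported above; the variable and function names are illustrative and not drawn from the assessment itself.

```python
# False positive rates (in percent) as quoted from the
# National Physical Laboratory assessment cited above.
false_positive_rate_pct = {"Asian": 4.0, "Black": 5.5, "white": 0.04}


def disparity_vs_white(rates):
    """Return how many times higher each group's rate is than the white rate."""
    baseline = rates["white"]
    return {
        group: rate / baseline
        for group, rate in rates.items()
        if group != "white"
    }


ratios = disparity_vs_white(false_positive_rate_pct)
for group, ratio in ratios.items():
    print(f"{group}: {ratio:.1f}x the white false positive rate")
```

On these figures the ratio is 100 for Asian faces and 137.5 for Black faces. The calculation says nothing about how the rates were measured; it only restates the gap between them.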

Thames Valley Police admitted to Choudhury that his arrest “may have been the result of bias within facial recognition technology.” In the same breath, an officer told him that because facial recognition was already under strategic review, she did not feel the need to flag his case for broader organisational learning. The problem was known. The response was to file it away.

Choudhury’s lawyer, Iain Gould of DPP Law, summarised the situation with precision: the police had been playing “AI lottery with people’s lives.”

THE PATTERN

Choudhury is not an anomaly. He is the latest entry in a growing ledger of facial recognition false arrests that disproportionately target people of colour. In the United States, Robert Williams, Porcha Woodruff, and Randal Reid were all wrongly arrested after police treated algorithmic matches as case-closed conclusions. In the UK, South Wales Police recently paid damages to a Black man wrongfully arrested and held for 13 hours after a facial recognition error. Anti-knife crime campaigner Shaun Thompson was detained by the Metropolitan Police after another false match. The Equality and Human Rights Commission has intervened in his case.

The pattern is always the same. Software flags a face. A human glances at two photos. The human sees the generic—same race, similar build—rather than the specific. The arrest follows. The apology, if one comes at all, blames the technology. And the institution insists the arrest was “lawful.”

Choudhury now fears that because he has been arrested a second time—and a second mugshot has been entered into the system—the cycle will repeat. He holds Home Office and Metropolitan Police security clearance for his work. Both arrests must be disclosed. He worries about professional consequences from events he did nothing to cause.

| THE VERDICT | |
| --- | --- |
| The System | Cognitec FaceVACS-DBScan ID v5.5 (retrospective facial recognition), procured by the UK Home Office, deployed via the Police National Database. Software version dates from 2020. Known racial bias documented by the National Physical Laboratory in December 2025. |
| The Decision-Maker | Unnamed investigating officer, Thames Valley Police, who approved arrest based on software match and visual comparison of two photographs. |
| The Victim | Alvi Choudhury, 26, software engineer, Southampton. No criminal record. Held ten hours. Mugshot in system from previous wrongful arrest in 2021. |
| Accountability Status | Pending. Choudhury is pursuing a civil damages claim against Thames Valley Police and Hampshire Constabulary via DPP Law. The Equality and Human Rights Commission has flagged systemic concerns. Thames Valley Police maintains the arrest was lawful. No officer has been disciplined. |
| Blame Rating | ★★★★☆: The algorithm flagged a face. Humans arrested a person without doing ten minutes of detective work that would have cleared him. Then they called it lawful. |